by Tim Varelmann

Mixed-Integer Optimization problems are so-called ‘combinatorial optimization problems’. In combinatorial optimization problems, we search for an optimal combination of discrete decisions. If you ever ate a Sandwich at Subway, you are familiar with discrete decisions: In a Subway restaurant, you have a choice between six kinds of bread, sometimes more. You can not choose 30% of one bread and 70% of another in your Sub, so the decision which bread you want is a discrete decision. In combinatorial optimization problems, enumerating all decisions and testing them is, in general, impossible.

In the Subway example, with six kinds of bread, two sizes, thirteen meats, four cheeses, seven veggies (for each of which you make a yes/no decision), nine sauces, salt and pepper (yes/no again), you’d already have over 1.4 million different Subs at hand. Plus, many Subway restaurants offer even more veggies, sauces etc., and if you kindly ask your server, you might get two sauces... The point is, you can not identify your favorite Sub by testing all possibilities. In a Subway restaurant, the decisions are independent of each other. As you know your preferences for bread, you do not have to test all breads, say, with and without olives. Therefore, you can simply build your Sub step by step.

In mathematical optimization, we model decisions including technical, economical and logistical aspects, which depend on each other tightly. Thus, we can not make high-quality decisions by isolating single decisions. Instead of enumerating and testing every single option, we use algorithms that exclude many suboptimal decisions quickly to be able to optimize over a much smaller search space. However, in the worst case, such algorithms fail to rule out suboptimal decisions and still require excessive run time. The practical situation is way better than the worst case. Bob Bixby, the first developer of CPLEX and one of the founders of Gurobi, once put it aptly: “mixed-integer optimization is a bag of tricks”. Although it is impossible to prove that such tricks prevent the worst case, many of these tricks are practically useful to find optimal solutions faster than the wost-case scenario.

In this post, I want to present some tricks that are implemented in mathematical optimization solvers in an accessible manner by pointing out similarities to a task from daily life: matching socks after washing them. This can be quite an annoying task. When the socks are clean, you want to match every single sock into suitable pairs of socks. You want all socks to be part of a matching pair, so you maximize the number of matching pairs. This means you have an optimization problem. And unless your name is “Pippi Longstocking”, or “Dobby the house-elf”, you will have to match your socks carefully.

In the worst case, matching your socks takes a long time. Imagine you pick a sock from the pile and compare it to all others, only to find its partner is the last sock in your comparison. On the hypothecial worst of all days, your next sock has to be compared to all others again, and you are equally unlucky for every pair of socks in your pile. If you wash your socks in a batch of 25 pairs, you’d have to compare the first sock to 49 other socks. After removing the first pair from the pile, you’d have to compare the next sock to 47 remaining socks. Matching the third pair of socks would require 45 comparisons. The second to last pair would require 3 comparisons. Then, only the last pair remains in the pile, but as you had bad luck all day, you want to compare those socks to each other as well, to make sure the washing machine has not eaten any socks. In the end, you had to make

49 +47 +45 + ... + 3 + 1 = 625

comparisons.

Hopefully, you will and have never experienced this scenario when matching your socks. Chances are quite good though, because your perception - like a mixed-integer optimization algorithm - has a bag of tricks to identify matching pairs of socks. Thus, sorting socks cleverly is somewhat similar to mathematical optimization algorithms. Both use tricks to exploit real-world structures in order to finish quickly. We will now examine four such tricks in both use cases, starting from initial problem reduction and moving towards treatment of large-scale problem instances.

Sometimes, we have a pair of really colorful socks which almost extravagantly shine in the pile of socks to sort. Such socks are easy to spot and naturally form the first matched pairs of socks. Human perception highlights bright colors effectively, so to find the counterpart to a very colorful sock, we seldomly have to look through the whole pile.

Also, from a combinatorial perspective, it is effective to do this first. As we have seen, in the worst case, it takes more comparisons to find the first pair than to find any subsequent pair. Since we remove matched pairs from the pile, we will compare socks that are matched later to a smaller pile of remaining socks, which requires fewer comparisons. This means when socks are so colorful that they stand out, matching them first is the most efficient use of their colorful property.

Mathematical optimization solvers also start an optimization with some simple, almost trivial sanity checks on the input model. As more and more models are generated automatically by other pieces of software, seemingly stupid mistakes happen quite frequently. It makes sense to catch such errors early. For example, a good solver would identify redundant constraints in the presolving stage. The possibly simplest redundant constraint is

0T * x ≤ 0.

Another example can be constructed with bounded, non-negative variables x ∈ [xlb, xub] and non-negative coefficients aT, for instance, aT * x ≤ b. If aT * xub ≤ b holds, any solution that respects the bounds of the variables x will respect the constraint, since a and x are non-negative. Then, the constraint can be dropped. It can also be useful to plug in the lower bounds for the variables. When aT * xlb > b, then aT * x ≤ b can never be satisfied, and the solver identifies the model to be infeasible.

Some solvers can also detect that a variable is a simple linear combination of some other variables. Then, they can automatically replace the first variable with a suitable expression in terms of the other variables. Just like matching strikingly colorful socks reduces the pile of socks that is left, presolving ensures that the problem at hand has no unnecessary complications.

After the most colorful pairs of socks have been removed from the pile, a sound strategy is to focus on socks that occur multiple times in the pile. Let’s say from the original 25 pairs of socks, we could remove two colorful pairs of socks, and from the remaining 23 pairs of socks, three pairs are identical socks. If you take one of these socks, there are now five socks in the pile of 45 remaining socks that would match this sock. The worst-case number of thorough comparisons until you find a matching sock does not require 45 comparisons, but just 41 comparisons, as there are at most 40 socks that would not match with your sock. This reduces the worst-case effort, but not significantly.

In practice, what is more relevant is that you reduce the expected number of comparisons when comparing in a random order. I did the exact math while writing this article. Unsurprisingly, the expected number of comparisons required to find the first matching sock is roughly divided by three if there are three same pairs in the pile, and it is roughly halved when there are two pairs of the same sock in a pile. This also means that when the first of the three identical pairs is found - and no other pair of socks occurs multiple times -, finding the second pair immediately afterwards is beneficial. Doing so is still expected to be twice as fast as matching a unique pair.

During clique identification, the mathematical optimization algorithm derives additional constraints that contain useful information for the solution process. Here is an example of a clique:

x1 + x2 ≤ 1

x1 + x3 ≤ 1

x2 + x3 ≤ 1

x1, x2, x3 ∈ {0, 1}.

This group of inequalities (“the clique”) describes that a value of one is allowed for at most one of the three variables. Clique identification can detect this and generate the additional constraint

x1 + x2 + x3 ≤ 1

to explicitly formulate this property. Such a constraint allows other techniques in optimization algorithms to extract the relation between x1, x2, and x3 from just one constraint instead of many constraints. The effect of this is similar to matching multiple socks early: We make our life as easy as possible in the beginning when the problem is still overwhelmingly large.

A note for readers who are familiar with basic mixed-integer optimization: the relaxed solution x1 = x2 = x3 = 1/2 is valid in the continuous relaxation of the original three constraints. However, it is not valid with the additional clique inequality, so the continuous relaxation is also tighter with the clique inequality.

Another intuitive technique we apply when sorting socks is to only compare short socks to other short socks - and long socks to long socks. We usually know our socks so well that we know at a glance if a sock is long or short. Such a glance can circumvent a more detailed comparison. If we compare a short and a long sock, we can abbreviate the comparison by immediately rejecting due to incompatible lengths. Some of you may also separate long socks from short socks. This is related to the last trick of this article in the next section.

A mathematical optimization algorithm uses smart approaches, called heuristics, to find feasible solutions (i.e., solutions that satisfy all constraints). Heuristics do not guarantee to find a feasible solution, but in practice, they are often successful within a short amount of time. An algorithm can therefore spend limited time using heuristics to find new solutions, hoping to find some high-quality solutions throughout the process. The equivalent in sock matching could be to take a short sock and only compare it to other short socks that are visible in the current pile of unmatched socks: You might just find a match and did not even have to touch the pile. However, there is no guarantee for this to work, since the partner sock may also be buried in the pile.

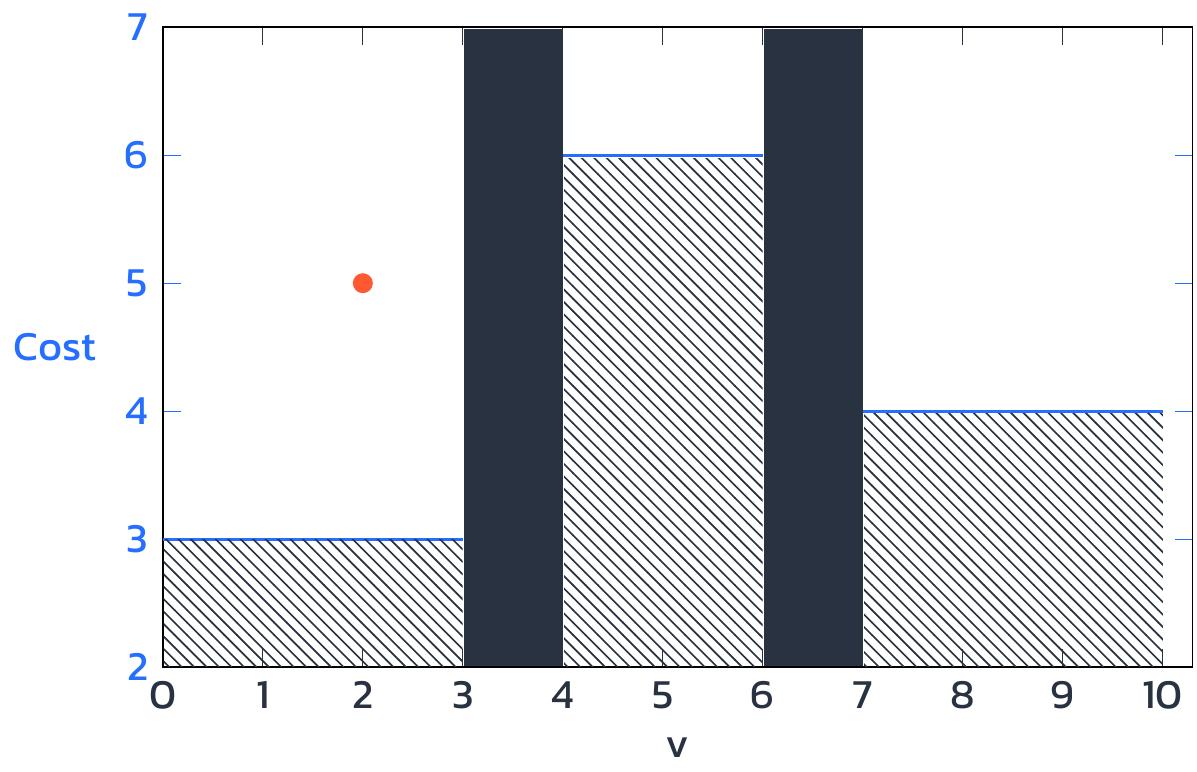

The value of finding a good feasible solution is grounded in the branch-and-bound framework mixed-integer problems are solved with. This means that solvers internally divide the problem into multiple smaller parts, and in each part, a bound for the best possible solution is easy to get. For example, a problem with an integer variable v with bounds 0 to 10 could be divided into three parts: in the first part, v can take values between 0 and 3; in the second part, v can take values between 4 and 6; and in the third part, v can take values between 7 and 10. We are looking for a minimum cost solution. In the first part, the lower bound for the optimal solution is 3. In the second and third part these lower bounds are 6 and 4 respectively. While the optimal solution is unknown, we have a guarantee that no feasible solution incuring a cost lower than these bounds exists.

If now the algorithm finds a feasible point in the first part with an objective value of 5, this is a precious result. Although it is not possible to say whether this solution is optimal, we now have a solution that is better than any solution we could possibly find in the second part of the problem, where the lower bound on minimal cost is 6. So now we know, that v will not have a value between 4 and 6 in the optimal solution. The remaining algorithmic effort can focus on the other parts of the problem. Identifying a good feasible solution has therefore proven a third of the problem irrelevant for the rest of the algorithm. This is also a very important technique to avoid enumerating all values.

Imagine you had to wash not just your socks, but for a family of four or five! If every person used 10 pairs of socks since the last laundry, you would end up with 40 to 50 pairs of socks. With such numbers, the combinatorial growth of the effort required to match the socks is an absolute nightmare. But, there is an elegant way to circumvent this issue: Do not wash the socks of all family members in the same laundry batch. Or, if you want to, have every family member use their own net of socks, so that you end up having to sort five batches of 10 pairs instead of one batch of 50 pairs. In the worst case, we can sort a small batch of 10 pairs using 100 comparisons, resulting in 500 comparisons for five small batches. In contrast, the large batch with 50 pairs of socks requires 2500 comparisons in the worst case, which is a fivefold effort required by exactly the same amount of socks!

Additionally, small batches do not prevent us from applying the tricks we have discussed before. If we can start sorting a batch of 10 pairs of socks by initially matching two very colorful pairs of socks, then find a pair that appears twice before sorting out two pairs of sneaker socks in a batch of otherwise long socks, we only have five pairs of socks remaining. Such a pile can at most require 25 comparisons to match all socks. Thus, we can easily sort five batches of socks even on a bad day and move on with our lives after doing the laundry.

In practical applications of mathematical optimization, models describing the decisions to be made can quickly become large. However, in large-scale problems, some loosely dependent components can often be identified. An expert in mathematical optimization can treat these components as separate optimization problems and come up with a smart algorithm to make sure the interdependencies between the components do not get lost. In fact, I have written my entire PhD Thesis by developing suitable decompositions for real-life problems. Such an expertise is not required when matching socks. To ensure there is no coupling between the individual batches of socks, it suffices to only put matching pairs of socks into the same batch before the laundry.

As with small piles of socks, the worst-case runtimes of many small problems lead to a total runtime much smaller than the worst-case runtime of one large problem. Again, the other tricks, from presolving to using powerful heuristics, can still be applied in smaller problems and reduce the practical runtime even more. So the benefits of multiple tricks stack up, just like they do when matching socks.

If you need help with automating tedious decision-making in a more profound area than matching socks, this is the right place. Mathematical optimization and its tricks apply to a broad variety of problems, including energy cost reduction, production scheduling, or logistical challenges. Tell me about your problem and I will share my expertise with vivid explanations. We might reduce the effort to make an optimal decision, so that pushing a button is all you need to do.

Thanks to Karsten Paul for proofreading this article and providing valuable feedback.